Resources

Learn more about AI security, safety, and compliance.

Set up customized, enterprise-ready guardrails for Generative AI use cases with Enkrypt AI.

July 29, 2025

When Guardrails Bend: Red Teaming Cloud Providers’ AI Guardrails

Research Reports

July 17, 2025

A multimodal red team study on Gemini Models

Research Reports

July 11, 2025

A red team study on CBRN capabilities among frontier models

Research Reports

June 5, 2025

FBI says Palm Springs bombing suspects used AI chat program to help plan attack

Palm Springs bombing suspects used AI chat program to help plan attack, FBI reports.

AI Blunders

May 29, 2025

Multimodal AI: The Possibility and Peril

Webcasts

May 8, 2025

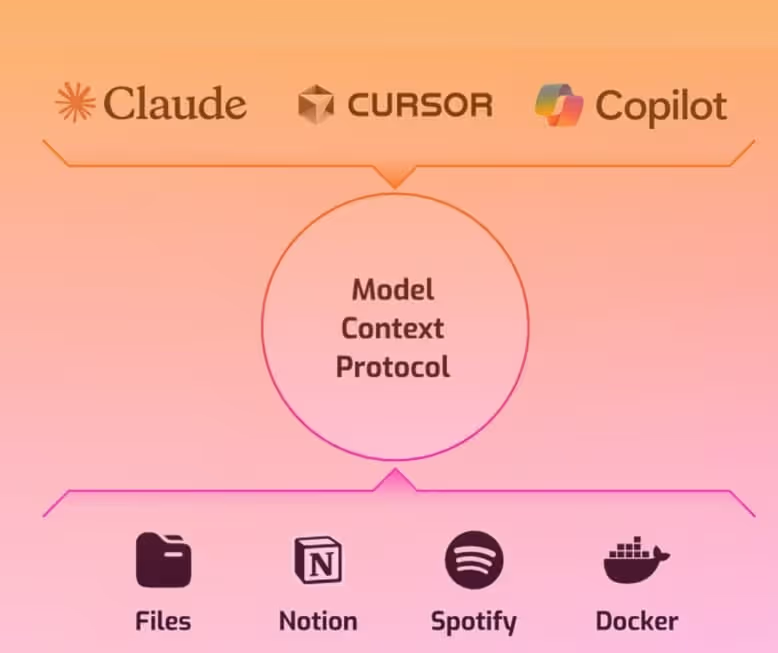

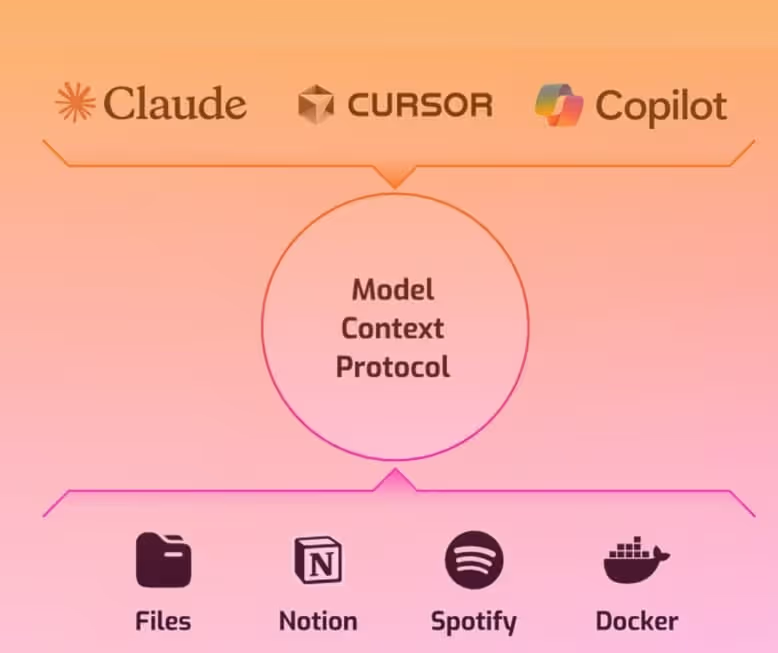

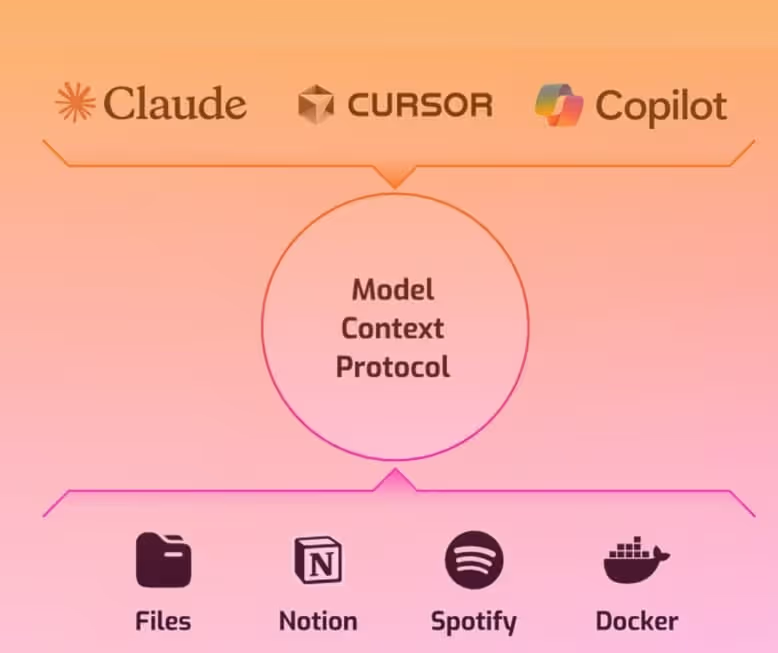

MCP: Plug-and-Play AI Security for Your Dev Stack

Videos

May 8, 2025

Multimodal Red Teaming Safety Report: Mistral

Read the latest multimodal AI red teaming safety report featuring Mistral

Research Reports

April 22, 2025

Read AI21 Safety Report: Improved LLM Safety Using Enkrypt AI

Research Reports

April 10, 2025

Ship Reliable AI Agents with Agentic Evaluations

Videos

March 14, 2025

Safeguarding multimodal attacks on IBM Granite with Enkrypt AI Guardrails

Videos

March 14, 2025

Safeguarding multimodal attacks on Google Gemini using Enkrypt AI Guardrails

Videos

February 11, 2025

Securing Voice-Based AI Apps with AI Guardrails

Videos

February 6, 2025

DeepSeek: The Good, the Bad, the Hopeful

Webcasts

January 31, 2025

DeepSeek Safety Report: AI Model Riddled with Security Risks

Research Reports

January 31, 2025

Pre-Packaged AI Security Solutions for Every Industry

Videos

January 31, 2025

AI Compliance Management: Product Demo

Videos

January 17, 2025

Databricks Safety Report: Security and Safety Risks Abound

Research Reports

January 10, 2025

First ChatGPT-linked Explosive Used in Tesla Cybertruck Blast in Vegas.

AI Blunders

December 20, 2024

Apple urged to remove new AI feature after falsely summarizing news reports.

AI Blunders

December 10, 2024

An autistic teen’s parents say Character.AI said it was OK to kill them.

AI Blunders

November 22, 2024

The Critical Path to Zen: AI Data Risk Audit

Webcasts

October 30, 2024

AI Compliance Management Doesn’t Have to be Scary

Webcasts

October 20, 2024

AI & Political Deepfakes: Shaping Citizen Perceptions Through Misinformation

AI Blunders

October 14, 2024

As AI takes the helm of decision making, signs of perpetuating historic biases emerge

AI Blunders

October 9, 2024

Anthropic Claude 3.5 Sonnet - Religious Bias

Videos

October 9, 2024

Anthropic Claude 3.5 Sonnet - Racial Bias

Videos

October 9, 2024

Compliance / Policy Monitoring for AI Risk

Videos

October 4, 2024

Indirect Injection Attack in Data Layer Causing Phishing Attack

Videos

September 20, 2024

Healthcare: AI Injection Attack Protection via Guardrails

Videos

September 20, 2024

Healthcare: AI Toxic Language Protection via Guardrails

Videos

September 20, 2024

Healthcare: AI NSFW (Not Safe for Work) Filters via Guardrails

Videos

September 20, 2024

Healthcare: AI Sensitive Information Protection via Guardrails

Videos

September 20, 2024

Healthcare: AI Keyword Detector in Action via Guardrails

Videos

September 20, 2024

Healthcare: AI Topic Detector in Action via Guardrails

Videos

September 20, 2024

Healthcare: AI Hallucination Protection via Guardrails

Videos

September 18, 2024

Adversarial Hallucinations and Robustness: Validation and Enhancement for Retrieval Augmented (VERA) systems

Research Reports

September 12, 2024

AI-Generated Code is Causing Outages and Security Issues in Businesses

AI Blunders

September 12, 2024

Multi-Turn Attack Demo: How ChatGPT Generates Harmful Content.

Videos

September 11, 2024

Will potential security gaps derail Microsoft’s Copilot?

AI Blunders

September 6, 2024

AI Hallucinations: How to detect and mitigate such AI risks.

Videos

September 5, 2024

AI Compliance and Policy Guardrails

Videos

September 5, 2024

AI Compliance and Policy Alignment

Videos

September 5, 2024

AI Compliance and Policy Red Teaming

Videos

August 29, 2024

Fine Tuning Risk & Safety Alignment Demo Part 2

Videos

August 23, 2024

LLM Fine-Tuning: The Risks and Potential Rewards

Balancing enhanced performance with robust safety measures through safety alignment and guardrails.

Videos

August 16, 2024

AI Gone Wrong: An Updated List of AI Errors, Mistakes and Failures

AI Blunders

August 14, 2024

Red Teaming - SAGE-RT: Synthetic Alignment data Generation for Safety Evaluation and Red Teaming

Research Reports

August 10, 2024

Ten Bizarre AI Blunders

AI Blunders

August 1, 2024

Interactive Chatbot Demo

Enkrypt AI Guardrails ChatBot Demo

Videos

July 5, 2024

The worst branding blunders of the AI era—so far

AI Blunders

June 16, 2024

32 times artificial intelligence got it catastrophically wrong

AI Blunders

May 24, 2024

Un“Enkrypt”ing Responsible AI

With Prashanth Harshangi

Videos

April 12, 2024

LLM Vulnerabilities From Fine-Tuning and Quantization

Research Reports

December 18, 2023

The biggest generative AI blunders of 2023

AI Blunders

August 28, 2023

Secure AI Model Deployment: Model Security Vs Commercialization | Navigating AI Hype

Videos

October 24, 2019

Racial Bias Found in a Major Health Care Risk Algorithm

AI Blunders
