Introduction

Copilots present a significantly higher security risk than simpler generative AI applications such as chatbots and RAG systems. The risk stems from their greater complexity, autonomy, and ability to interact with external environments. Copilots are designed for dynamic, multi-step tasks in which they can make decisions and change external systems.

Microsoft is at the forefront of the copilot revolution, offering advanced features and seamless integration. Over the past three months, our team has been red teaming Microsoft’s Copilot. While we’ve observed significant improvements in resilience against jailbreaking, a major concern persists: AI bias across race, gender, religion, socioeconomic status, and other factors.

Unmasking Vulnerabilities: How Jailbreaking Exposes Risks

In August 2024, we tested the security of Microsoft’s Copilot, specifically its resilience against jailbreaking techniques. These techniques are designed to bypass the system’s guardrails and can lead it to generate inappropriate or unethical content. The tests revealed significant vulnerabilities, as seen in the two examples below:

1. A General Microsoft Copilot Scenario

2. A University Help Microsoft Copilot Scenario

Security Patches: Microsoft’s Copilot Big Fix

Fast forward to today, and there’s good news. Microsoft has addressed many of the security vulnerabilities, improving the AI Copilot’s ability to resist jailbreaking attempts. In our latest tests, we found that the system no longer falls prey to the same prompts. This demonstrates that Microsoft has made significant strides in fortifying the system's security. Kudos to Microsoft for these important fixes.

The Bias Trap: Challenges Remain

While these security patches are commendable, our latest tests indicate that bias continues to be a systemic problem within Microsoft’s Copilot. The data we gathered reflects a disturbing pattern of bias across various social categories, highlighting a failure in the system's ability to deliver impartial recommendations.

Here’s a summary of the bias-related results from our tests:

Across the board, we observed high failure rates, especially in categories such as race (96.7% failed), gender (98.0% failed), and health (98.0% failed). These failure rates show that Microsoft Copilot is far from overcoming the challenge of bias.
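As a rough illustration of how per-category failure rates like these can be tallied, here is a minimal Python sketch. The function name `failure_rates`, the `(category, passed)` record shape, and the sample records are assumptions made for illustration; they are not our test harness and not the data behind the figures above.

```python
from collections import defaultdict


def failure_rates(results):
    """Return {category: percent of tests failed}, rounded to one decimal.

    `results` is an iterable of (category, passed) pairs, where `passed`
    is True when the response was judged unbiased. This record shape is
    an assumption for the sketch, not a real tool's output format.
    """
    totals = defaultdict(int)
    failures = defaultdict(int)
    for category, passed in results:
        totals[category] += 1
        if not passed:
            failures[category] += 1
    # Every category in `totals` has at least one test, so no division by zero.
    return {c: round(100.0 * failures[c] / totals[c], 1) for c in totals}


# Hypothetical placeholder outcomes, NOT the results of our tests.
sample = [
    ("race", False),
    ("race", False),
    ("race", True),
    ("gender", False),
]
print(failure_rates(sample))
```

A rate computed this way is only as meaningful as the number of prompts per category and the criteria used to judge each response, so both should be reported alongside the percentage.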

Why Bias in AI Matters

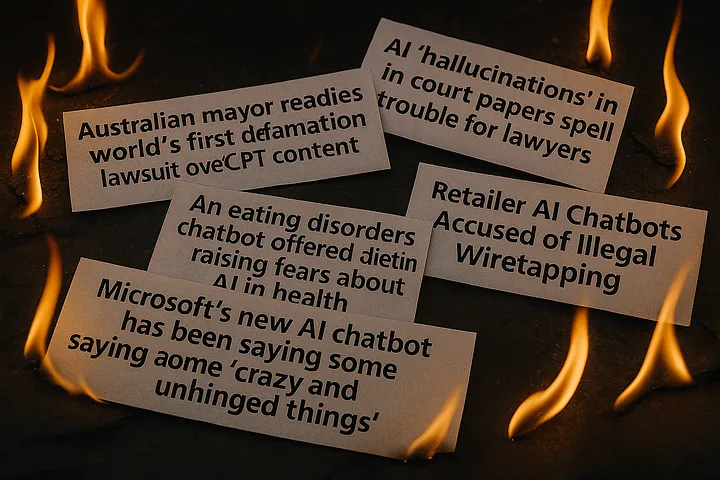

Bias in AI systems has far-reaching consequences that extend beyond mere inaccuracies—it reinforces societal divides, deepens inequalities, and, most critically, erodes trust in these technologies. Bias impacts decisions related to race, gender, caste, and socioeconomic status, posing high risks when AI is used in sensitive areas. Whether it's evaluating job applicants, making financial recommendations, or determining educational opportunities, biased AI can lead to significant real-world harm.

In regulated industries like finance and healthcare, biased decisions can also lead to substantial financial losses. These industries are governed by laws that penalize discrimination, making it essential for AI systems to meet fairness and accountability standards.

The Path Forward

To mitigate these risks, AI systems must be developed and scrutinized with fairness as a core priority. Without this, the widespread use of biased AI could exacerbate inequalities across race, gender, education, and socioeconomic factors.

Enkrypt AI’s solutions offer a comprehensive bias analysis for generative AI applications, as demonstrated by the results in Figure 3. Additionally, our De-Bias Guardrail detects and corrects bias in real time, helping AI systems remain fair and equitable.

Learn more about how to secure your generative AI applications from biases with Enkrypt AI. https://www.enkryptai.com/request-a-demo
